
Since 1 October 2017, it has been illegal in Germany to spread hate speech online. But serious doubts exist, both about whether internet companies will enforce the law correctly and about whether it will be effective against a far-right that now uses Russian servers and social media channels.

The violence in August this year in Charlottesville shocked the US public and sparked a debate about the influence of extreme right movements on the internet. The central question was where the limits to freedom of speech lie.

Just a few days after the far-right marches, the major web hosting company GoDaddy cut off The Daily Stormer, probably the largest neo-Nazi website in the US, with over 300,000 registered users. Authors on the site had repeatedly made insulting remarks about Heather Heyer, the 32-year-old killed when a rightwing extremist ploughed a car into a group of counter-protesters. Google then blocked the website editor’s attempt to switch to one of its servers, and the site appeared for a time under a Russian domain name.

The choice of Russia was no coincidence. In their ongoing search for sympathizers online, far-right groups all too gladly use Russian web hosting services and network providers. In Russia, they are subject to comparatively few restrictions on racism, anti-Semitism and other forms of hate speech. Using Russian web hosts also allows them to avoid prosecution by the authorities in their home countries.

However, the virtual emigration of rightwing extremists does not mean that their political influence is restricted to Russia – on the contrary. Because their content is not censored in Russia, they can disseminate their propaganda even more widely than elsewhere.

Western democracies in particular are faced with huge challenges: on the one hand, the internet offers far-right movements a range of exceedingly powerful propaganda instruments. With comparatively little effort, they can use social networks, websites and chats to reach a worldwide public. On the other hand, the global internet hampers nations’ ability to enforce laws against hate speech, whose jurisdiction is inherently national.1

Photo: ekvidi. Source: Flickr

Prosecutions for incitement to hatred and defamation have increased sharply in Germany in recent years. In 2014, German police recorded 2,670 cases of incitement; two years later, that figure had more than doubled to 6,514.2 There are two main reasons for this. On the one hand, far-right propaganda is now spread primarily on Facebook, Twitter, etc., and users now report offensive statements to the authorities more frequently. On the other hand, the tone of political debate has coarsened dramatically since the beginning of the refugee crisis: ‘Drop dead, you faggot’; ‘Merkel should be stoned’; ‘Gas the lot of them’ – today, this kind of language is all over the social networks.3

Xenophobia, racism and anti-Europeanism rose sharply in Germany at the height of the global refugee crisis in summer 2015. This was also when the rightwing populist party Alternative for Germany (AfD) began to climb in the opinion polls – hitting 20 per cent on some occasions. The German government desperately needed a means to stem this racist tide, which to a great extent was expressed via online hate campaigns.

In autumn 2015, the Social Democrat minister of justice, Heiko Maas, set up a task force bringing together representatives of Facebook, Google and Twitter, alongside numerous NGOs. The committee came up with a set of recommendations for dealing with online hatred. However, these remained largely ineffectual: according to one analysis at the beginning of 2017, YouTube deleted around 90 per cent of content reported by users, but Facebook only 39 per cent and Twitter just one per cent.4

For that reason, in June 2017, the Bundestag passed the so-called ‘Network Enforcement Law’ (Netzwerkdurchsetzungsgesetz – NetzDG). This confirmed that voluntary self-regulation of social networks had definitively failed. The NetzDG is supposed to force social network operators to delete ‘fake news’ and hate speech.5 According to justice minister Maas, freedom of speech would thereby be protected, ‘for today […] online defamation and threats are silencing people and they are thus being robbed of their freedom of speech by these criminal acts’.6

The NetzDG, which comes into effect on 1 October 2017, forces network operators to delete within 24 hours any ‘obviously illegal’ content reported to them. Where content is not unequivocally illegal, the law allows a period of seven days in which to act.7 Only in exceptional cases can this limit be exceeded. If systematic failures to enforce the law occur, the employees responsible risk being fined up to €5 million; companies can be fined – if only in theory – up to €50 million. Additionally, network operators must establish a system so that users can report potentially illegal content. Any social network with more than two million users must also appoint a contact person with whom the authorities can deal. This move spells an end to the ‘facelessness’ of Facebook and co.

In Germany, restrictions on free speech are much tighter than in the United States. Simply put, in the United States freedom of speech includes the right to disseminate lies, whereas in Germany it is ‘freedom of opinion’ that is protected. This does not cover statements that constitute incitement to hatred or defamation. Under German criminal law, you are guilty of defamation if, against your better knowledge, you assert or disseminate an untrue statement relating to another person that is likely to cause that person to become an object of contempt, to lower public opinion of them, or to threaten their reputation. Convictions carry a prison sentence of up to five years.8

Incitement to hatred, on the other hand, covers breaches of the peace through incitement of hatred against parts of the population, through calling for violent or repressive measures against them, or through attacking their human dignity through abuse, malicious slander or defamation. This offence includes publicly approving of, trivializing or denying the Holocaust. Convictions carry a prison sentence of between three months and five years.9

The incitement paragraph in its current form originated in an amendment to the criminal code in 1960. It was a response by the Adenauer government to a wave of anti-Semitic offences, including a series of arson attacks on synagogues.10 In the decades since, the section has been repeatedly changed and tightened. In 1994, the ‘simple’ denial of the Holocaust – i.e. denial without explicit identification with Nazi ideology – was also criminalized as incitement to hatred.11

In principle, it is to be welcomed that policy-makers are taking strong action against fake news and online hate speech. The law against incitement to hatred is intended to prevent the emergence of a climate of opinion where certain groups are aggressively excluded and where they could also become the victims of physical violence.12 In each case, it is incumbent upon public prosecutors and the courts to weigh up the constitutional right to freedom of opinion against the safeguarding of the public peace. However, it is precisely here that the NetzDG may do more harm than good.

Civil rights and internet activists have criticized the NetzDG for allegedly restricting freedom of opinion instead of protecting it. Normally, it is the job of public prosecutors or courts to determine whether specific statements constitute defamation or incitement to hatred. According to the new law, however, this decision is now to be left to the social networks themselves. The government is therefore appointing these corporations, which are already parties to each case, as both judges and opinion police.

Given the sheer mass of decisions that they are supposed to take, social media service providers are now confronted with a Herculean task: the German ministry of justice has estimated that social networks receive over 500,000 complaints annually, concerning hate speech among other things. This is equal to the total number of crimes recorded every year for the whole of Berlin, for example.

As a rule, it is not a lawyer at Facebook who decides whether content is to be deleted, but a ‘content moderator’. In Germany, around 700 employees of the Bertelsmann subsidiary Arvato are responsible for moderating Facebook – for an hourly rate of just over the €8.84 minimum wage. Working under assembly line conditions, their job is to decide which posts – ranging from naked body parts to videos of sadistic violence – should and should not be removed from users’ newsfeeds. Until now, moderation was based on Facebook’s ‘community standards’, a secret catalogue containing several hundred rules regulating the deletion of content.13 From now on, however, Facebook will also have to consult the German penal code.

It is clear what the consequences will be. In order to avoid costs and lengthy court cases, not to mention the draconian fines that hang over the heads of their employees, social media companies will delete reported comments if in doubt. Conscientious assessment based on legal criteria is precluded.14 Given that most users would be reluctant to cover the costs of challenging the decision to remove their content, an arbitrary culture of deletion will emerge that contradicts the guarantee of freedom of opinion made in Article 5 of Germany’s Basic Law.15

A broad alliance of trade associations, internet campaign groups and civil rights organizations warned the Bundestag in advance not to entrust Facebook and similar companies with ‘the state’s task of deciding on the legality of content’. Neither should enforcement be allowed ‘to fail because of the judiciaries’ lack of resources’.16

For this is where the next problem lies. The German courts are already massively overstretched. According to the German Association of Judges, there is a shortage of around 2,000 judges and public prosecutors nationwide, with criminal justice particularly affected.17 Furthermore, there is still no public prosecution office specifically responsible for digital hate speech.18 It would need a great deal more time and money before the judiciary had sufficient resources to cope with the flood of reports.

Even if the resources were at some point to materialize, there is no guarantee that hate speech would actually disappear from the internet. For judges and public prosecutors also find it difficult to glean from the law on incitement what exactly constitutes a criminal act. They have to carefully examine ‘the context in which such statements are found and interpret them in the light of freedom of opinion, which also protects drastic, exaggerated and polemical statements’, explains the Berlin lawyer Ali B. Norouzi.19

The difficulty of contextual interpretation is also why many cases are dropped or suspended – including the case of Lutz Bachmann, founder of the Islamophobic and rightwing Pegida movement. After the sexual attacks on women in Cologne on New Year’s Eve 2015/16, the perpetrators of which included numerous refugees, Bachmann sold T-shirts with the slogan ‘rapefugees not welcome’. The Green Party politician Jürgen Kasek brought charges against Bachmann for incitement to hatred. Kasek argued that the slogan presented refugees per se as rapists and thus fuelled xenophobia. Leipzig’s public prosecutor did not share this opinion and interpreted the words literally to mean that refugees who commit rape are not welcome. In the public prosecutor’s eyes, there were no grounds for charges under the law on incitement to hatred.

Prosecuting incitement to hatred is even more difficult when offences are committed abroad – albeit for different reasons. According to the law on incitement, these are to be prosecuted no differently from domestic offences, regardless of whether the offenders are resident in Germany or not. Here, too, the condition is that such offences impact on the public peace in Germany and injure the human dignity of its inhabitants. As such, it suffices for criminal content to be accessible on the internet in Germany.20

In order for there to be a conviction, it must be proved beyond reasonable doubt that the statement is attributable to the accused. This is relatively easy to establish if the offender makes their statements in public with witnesses present. On the internet though, it is not so simple to prove whether a particular person is indeed the author of a statement – especially if the social network operator refuses to cooperate with the German prosecution authorities.21

This is another reason why open and organized rightwing extremism is increasingly migrating to the so-called Runet – above all to VK.com.22 The multilingual social network has become the new home of far-right groups banned from Facebook, which are now linking up with other rightwing movements from other countries.

VK.com was founded in October 2006 by the brothers Pavel and Nikolai Durov as VKontakte and looks like a Facebook clone. It has over 400 million Russian-language users, making it Russia’s most popular website and the fifth largest website worldwide.23 Close to 70 per cent of its visitors are from the Russian-speaking space. However, the network has also established itself in Germany, albeit on a far smaller scale. Around two per cent of visitors are from Germany, equating to around 14 million visits a year.24

On VKontakte, German Nazis, anti-Semites and racists can deny the Holocaust, mock victims of the Shoah and insult non-white people and Jews – all for a German public.25 While the social network does have conditions of use that forbid racist content, for example, it is extremely rare for posts to be deleted. In Germany, the political use of the swastika is forbidden; on VK.com, however, Nazis can use this and other symbols without fear of censorship. What is more, all this is publicly visible: unlike Facebook, most VK webpages are directly accessible without having to register and log in.

What was once the largest far-right Facebook page on the German-language web, Anonymous.Kollektiv, migrated to the Russian web in spring 2016.26 The page had over two million followers. By way of comparison, Germany’s two largest parties, the CDU and SPD, have just 300,000 followers between them.27 Alongside all manner of conspiracy theories and Russian propaganda, the Anonymous.Kollektiv page primarily contained incitements to racial hatred towards refugees. Refugees were described as a ‘sex-hungry, paedophile horde’ or ‘human trash’, for example. The page also linked to videos that trivialized the Holocaust.

The site’s operator was probably Mario Rönsch.28 Though relatively little is known about him, Rönsch is known to come from Erfurt in Thuringia and is thought to have been an AfD member until around 2014. He has multiple previous convictions – including for fraud and delay in filing for insolvency. In the past, he liked to be seen alongside Jürgen Elsässer, a figurehead of the new Right scene and editor-in-chief of Compact magazine. The latter’s writing was also promoted on the Anonymous.Kollektiv website. When, after numerous complaints, Anonymous.Kollektiv’s Facebook page was blocked in May 2016, the page switched to VK.com.

The content on the new page mainly comes from the website anonymousnews.ru, which evidently belongs to Rönsch too. Until the beginning of 2017, he also ran Migrantenschreck.ru, an online weapons shop. Among other things on offer was the MS55 handgun, whose ‘muzzle energy’ could fell any ‘asylum supporter’. The German security forces had difficulty closing the shop down because its data was stored on a Russian server and the weapons were distributed from Hungary, where they are not forbidden. It was only when the German authorities directly pursued Rönsch’s German customers that he shut up shop.29

Rönsch has meanwhile gone underground. Although his VK page has ‘only’ 50,000 followers, it continues to play an important role for the far-right and rightwing populist community on VK.com. In addition to Anonymous.Kollektiv and Compact, VK.com also plays host to the ‘Identitarian movement’, the AfD, the rightwing publisher Kopp, the portal ‘PI-news’ (‘Politically Incorrect News’), Pegida and its founder Lutz Bachmann, as well as numerous far-right brotherhoods and pro-Hitler groups.30

VK.com could, like Facebook, clamp down on racist and anti-Semitic hate speech – were the Kremlin not pulling the strings. Some time ago, the Russian government took over the social network. Quite how this happened is unclear. However, it is certain that VKontakte founder Pavel Durov entered the sights of the Putin regime and was put under increasing pressure. In April 2014, he resigned as VKontakte’s CEO. ‘Complete control over VKontakte is being passed to Igor Sechin and Alisher Usmanov’, Durov wrote on his VK profile at the time.31

Both of the men he referred to have a high profile: Usmanov is a Russian oligarch born in Uzbekistan and the largest shareholder in the investment group Mail.ru. The group bought its first shares in VKontakte in 2010 and in 2014 became the network’s sole owner.32 Sechin is executive chairman of the oil company Rosneft and considered the second most powerful man in Russia. Like Usmanov, he is among Vladimir Putin’s closest advisers.33

According to Roman Dobrokhotov, the editor-in-chief of the Kremlin-critical portal The Insider, Russia’s domestic intelligence service, the FSB, probably has full access to the network’s infrastructure and content. However, the Russian government has little interest in sanctioning rightwing extremism; news critical of Putin is the much higher priority.34

The fact that the Kremlin appears to ignore rightwing extremists means that they can continue posting racist and anti-Semitic content on VK.com, insulting individuals and spreading fake news. Legal measures like the German law against incitement to hatred had already proved to be blunt weapons. The NetzDG will also fail to solve the problem of hate speech and fake news, but will instead push it into further corners of the internet. Far from falling silent after having been barred from Facebook, far-right movements have merely adapted their propaganda strategy to the new circumstances.

Far-right extremists in Germany increasingly use VK.com to radicalize users. The network also has a strong centripetal effect in the far-right scenes in other countries. At the same time, the far-right also continues to recruit supporters on Facebook, using various codes and caricatures to escape censorship.35 ‘Down with the West, down with the Lügenpresse [‘lying press’] and down with the “Jewbook” ’36 – these are the slogans with which they attract sympathizers on Facebook and then entice them onto VK.com. On the Russian network, the new Volksgenossen (or ‘comrades’) are then integrated into the groups organized by rightwing extremists.37

There is not much the German authorities can do other than to simply look on. Their hands are tied, since they receive no user data from VK.com. The most the German police can do is to pursue individual offences – as long as they can establish the identity of the author of illegal posts. They seldom succeed in doing so.

It is by no means only rightwing extremists in Germany who cluster around VK.com. The US far-right is also flocking to the social network: white supremacists, alt-right members and neo-Nazis. In the early 2000s, the Ku Klux Klan held dozens of meetings every year all over the country. Since then, such gatherings have become rare. Instead, networking activities have largely shifted to the internet.38 This has prompted the sociologist and cyber-racism researcher Jessie Daniels to warn of the formation of a ‘transnational white class’: the World Wide Web not only allows rightwing extremists from all countries to recruit largely anonymously and with few resources, but also enables them to network with like minds all over the world.39

The fatal consequences of this development were to be seen in June 2015, when Dylann Roof shot nine people, all of them African Americans, at a bible class in a church in Charleston, South Carolina. As far as is known, Roof maintained no contact at all with radical right movements in his neighbourhood. Instead, he had been radicalized on the internet and regularly visited the website of the ‘Council of Conservative Citizens’. The Council propagates the idea of white domination and sees black people as a ‘retrograde species of humanity’. Roof apparently also regularly read and commented on content in The Daily Stormer – the ‘world’s biggest hate site’ (Heidi Beirich).40

In order to halt hate speech and strengthen measures against far-right networking, alongside opposition and engagement on the part of civil society, one thing is needed above all: cooperation between host servers and between national regulatory authorities. This is both possible and effective, as attempts by The Daily Stormer to find a digital hiding place show. The portal only managed to operate under a Russian domain name for a short time following the violence in Charlottesville. After just a few hours, the Russian supervisory authority Roskomnadzor denied the site permission to register in its part of the internet – public pressure had obviously become too great.

The Daily Stormer had no other choice than to migrate to the dark web – the internet’s underworld. While this offers anonymity and protection, content in this part of the internet cannot easily be found. Search engines like Google do not link to it; instead, you need special software to gain access. Rightwing hate speech that publicly calls for or legitimates violence now encounters at least a significant hindrance.

1. Clearly, in legal terms, a distinction needs to be made between propaganda and hate speech. In Germany, for example, propaganda is illegal only if it pertains to an organization that has previously been ruled to be anti-constitutional. In practical terms, however, the two are virtually equivalent. For definitions of propaganda and hate speech, see: ‘Was ist Propaganda?’ [‘What is propaganda?’], www.bpb.de, 1 October 2011, as well as Jörg Meibauer (ed.), Hassrede/Hate Speech: Interdisziplinäre Beiträge zu einer aktuellen Diskussion [‘Hate speech: Interdisciplinary contributions to a topical debate’], Gießen 2013, http://geb.uni-giessen.de/geb/volltexte/2013/9251/pdf/HassredeMeibauer_2013.pdf.

2. Cf. Bundeskriminalamt, Polizeiliche Kriminalstatistik 2017 [Federal Criminal Police Office, Police criminal statistics 2017].

3. Hate speech directed at migrants is also frequently accompanied by violence. In 2015 alone, the authorities counted thousands of attacks on refugees’ accommodation. Cf. ‘Anschläge auf Asylunterkünfte haben sich 2015 vervierfacht’ [‘Attacks on asylum seekers’ accommodation quadruple in 2015’], www.spiegel.de, 9 December 2015.

4. Jugendschutz.net, Zahlen zu Rechtsextremismus online 2016 [Online rightwing extremism in figures 2016], February 2017.

5. Fake news is deemed illegal if it violates a person’s rights, that is, when it constitutes insult, defamation or slander, or where it serves as propaganda for an anti-constitutional organization or otherwise represents a breach of the peace. Hate speech is deemed illegal where, in addition to offences involving insult, incitement to hatred also comes into play. This is the case, for example, when a refugee is subjected to abuse, or when hatred is incited or violence provoked against him or her. Cf. https://www.tagesschau.de/inland/fake-news-politik-101.html

6. Cf. the speech by Federal Minister of Justice and Consumer Protection Heiko Maas at the 22nd German Judge and Public Prosecutor Day, 5 April 2017.

7. The network operators themselves can take decisions regarding content that is not unequivocally illegal, in other words carry out a form of voluntary self-regulation. Any such body must be government approved and overseen by the Federal Ministry of Justice. Cf. ‘Löschorgie droht: Bundestag beschließt Netzwerkdurchsetzungsgesetz’ [‘An orgy of deletion looms: Bundestag passes Network Enforcement Law’], www.heise.de, 30 June 2017.

8. Sect. 130 para. 1 Strafgesetzbuch (Criminal Code – StGB).

9. See: Bundesgesetzblatt 2011, Part I, No. 11, published 21 March 2011, p. 418.

10. ‘Incitement to hatred’ had previously mainly applied to communist agitation, criminalizing the incitement to class war. See: Wissenschaftliche Dienste des Deutschen Bundestages, ‘Volksverhetzung’ [German Bundestag scientific service, ‘Incitement to hatred’], 1 October 2009, www.bundestag.de.

11. On 13 April 1994, the Federal Constitutional Court issued a judgement stating that Holocaust denial did not fall under the fundamental right to freedom of opinion under article 5, para. 1 GG. Holocaust denial concerns an untrue statement of fact and cannot therefore contribute to the formation of opinion as constitutionally defined. Section 130 of the criminal code was amended accordingly in October 1994. See: BVerfGE 90, 241, 1 BvR 23/94, para. no. 40.

12. A breach of the peace does not actually have to occur. Under Section 130, an act can already be prosecuted if it constitutes a potential danger that is likely to cause a breach of the peace.

13. Cf. ‘Im Netz des Bösen’ [‘In the net of evil’], sueddeutsche.de, 15 December 2016, as well as ‘Facebooks Gesetz: die geheimen Lösch-Regeln’ [‘Facebook law: The secret deletion rules’], sueddeutsche.de, 16 December 2016.

14. The UN’s special rapporteur on freedom of opinion, David Kaye, has also criticized the NetzDG. See: www.heise.de, 9 June 2017.

15. See: ‘Mal eben den Rechtsstaat outsourcen’ [‘Now just outsource upholding the rule of law’], www.zeit.de, 30 June 2017.

16. See: deklaration-fuer-meinungsfreiheit.de.

17. See: ‘Droht der deutschen Justiz der Kollaps?’ [‘Is the German judiciary facing collapse?’], augsburger-allgemeine.de, 7 April 2017.

18. On the notion of hate crime, see: ‘Fragen zur polizeilichen Lagebilderstellung von Anschlägen gegen Flüchtlingsunterkünfte’ [‘Questions concerning the police’s perspective on attacks against refugee accommodation’], BT-Drs. 18/7000, answer to question 22b, p. 17.

19. ‘Was ist Volksverhetzung’ [‘What is incitement to hatred’], www.anwaltauskunft.de, 25 July 2014, updated 18 January 2017.

20. Cf. BGH 1 StR 184/00 – judgement of 12 December 2000.

21. The IP address can be used to trace a statement back to the computer. However, several persons can have access to the computer to which that IP address belongs. For a conviction to stand, it must be possible to name the individual perpetrator. Social network accounts can also be hacked. The public prosecutor must be able to conclusively refute such arguments in defence.

22. According to Saxony’s State Office for the Protection of the Constitution, rightwing extremists have increasingly switched to VK.com in the past few years. See: www.verfassungsschutz.sachsen.de/download/VSB2016_Vorabfassung.pdf.

23. By contrast, the number of Facebook accounts in Russia in 2016 stood at around 21 million. Cf. Kevin Limonier, ‘Silicon Moskau’ [‘Silicon Moscow’], in: Le Monde diplomatique 8/2017, p. 1.

24. ‘Facebook als Teil der „Systempresse“: Rechte machen russisches Network VK.com zum Nazi-Netz’ [‘Facebook as part of the “system press”: Rightwingers turn Russian network VK.com into a Nazi network’], meedia.de, 10 February 2016, online at: http://meedia.de/2016/02/10/facebook-als-teil-der-systempresse-rechte-machen-russisches-network-vk-com-zum-nazi-netz; ‘VK: Die russische Facebook-Alternative für Neonazis, Verschwörungstheoretiker und Internethetzer’ [‘VK: The Russian Facebook alternative for neo-Nazis, conspiracy theorists and online hatemongers’], belltower.news, 13 July 2017, online at: http://www.belltower.news/artikel/vk-die-russische-facebook-alternative-f%C3%BCr-neonazis-afd-4782.

25. Around 2,000 nationalist groups are estimated to be active on VK.com; 17,000 members are registered with the largest three. Sarah Kaufman, ‘Sorry, Everyone: The “Miss Hitler” Pageant Has Been Canceled’, vocativ.com, 20 October 2014, online at: http://www.vocativ.com/world/russia/miss-hitler-pagent; Olga Khazan, ‘American Neo-Nazis Are on Russia’s Facebook’, www.theatlantic.com, 20 May 2016, online at: https://www.theatlantic.com/technology/archive/2016/05/extremist-groups-vkontakte/483426/.

26. The Anonymous network of hackers, which campaigns against censorship and surveillance, has repeatedly distanced itself from this eponymous hate-speech page.

27. As of September 2017.

28. Rönsch has sworn that he is neither the site’s administrator nor its operator. However, there are several witness statements asserting the opposite. And Rönsch has probably given himself away on Facebook anyway. Cf. ‘Die dunklen Seiten des Mario R.’ [‘The dark side of Mario R.’], www.focus.de, 30 May 2016.

29. See: ‘Das Ende des Online-Waffenhandels “Migrantenschreck”’ [‘The end of the online weapons dealer “Migrantenschreck”’], www.stern.de, 3 February 2017.

30. See, for example: vk.com/id345862506, vk.com/afd_rus, vk.com/koppverlag, vk.com/pinewsnet.

32. Up until 2013, Mail.ru also held shares in Facebook.

33. Cf. ‘Igor Sechin: Russia’s second most powerful man’, www.ft.com, 28 April 2014.

34. Cf. ‘Im VK-Netz des Druiden’ [‘Inside the Druid’s VK net’], www.tagesschau.de, 28 January 2017.

35. See: Jugendschutz.net, ‘Rechtsextreme rekruitieren auf allen Kanälen’ [‘Rightwing extremists are recruiting on all channels’], February 2017.

36. Facebook founder Mark Zuckerberg is Jewish.

37. ‘Russisches Netzwerk VK.com: Sammelbecken für Facebook-Hetzer’ [‘Russian network VK.com: Where Facebook inciters of hatred gather’], www.deutschlandfunk.com, 11 February 2016.

38. See: Heidi Beirich, ‘White homicide worldwide’, Southern Poverty Law Center’s Intelligence Report, www.splcenter.org, summer 2014.

39. Cf. ‘American neo-Nazis are on Russia’s Facebook’, www.theatlantic.com, 20 May 2016.

40. Keegan Hankes, ‘Dylann Roof May Have Been A Regular Commenter At Neo-Nazi Website The Daily Stormer’, www.splcenter.org, 21 June 2015.

Published 29 September 2017

Original in German

Translated by Ben Tendler

First published by Eurozine

© Daniel Leisegang / Eurozine

PDF/PRINTSubscribe to know what’s worth thinking about.

Lev Gudkov on the roots of fear in Russian society; translation as survival strategy in Soviet Kyiv; why the EU needs to get real on Belarus; what the Armenia–Iran relationship means for the South Caucasus.

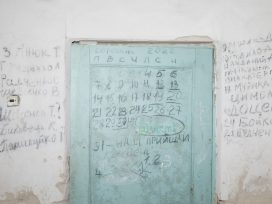

Russia’s full-scale invasion of Ukraine is entering its fifth year. With peace negotiations at a standstill, traumatized communities face a tough question: What does it mean to memorialize a war when its end is nowhere in sight? War crime survivors from Yahidne are actively engaging in how their mass confinement is remembered.